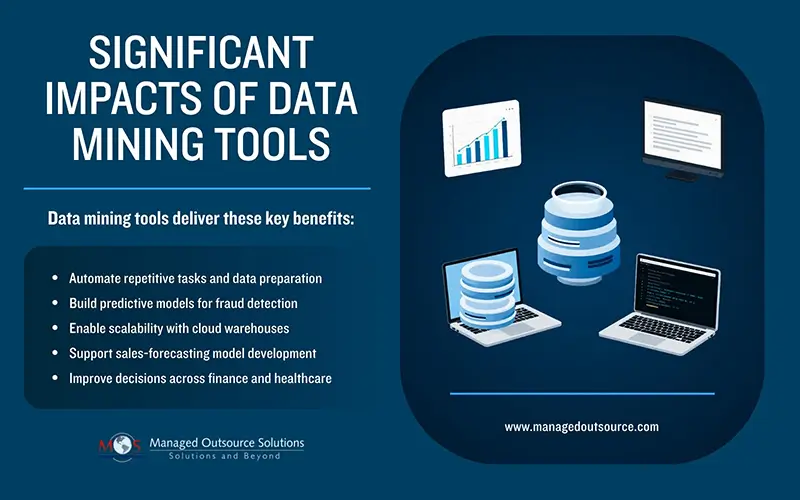

Data mining tools and software underpin data-driven business decisions. Instead of relying on guesswork, companies use structured data mining techniques to uncover patterns, predict trends, and act on reliable information. Modern tools automate the cleaning, transformation, and analysis of large datasets, which cuts manual effort and speeds up results. With the right tools, businesses can detect shifts in customer behavior, prevent fraud, and optimize operations more efficiently.

Data Mining Tools and Software for Modern Businesses

Modern data mining tools and software cover everything from data preparation and visualization to advanced machine learning and deployment. Instead of spending weeks writing custom scripts, teams can use drag-and-drop interfaces, pre-built workflows, and cloud-ready platforms.

These tools help organizations prepare raw records by removing duplicates, handling missing values, and standardizing formats. They also help discover hidden patterns through clustering, classification, and association rules. Finally, they put predictive models into production so marketing, finance, and operations can act on real-time outputs. As a result, many companies now treat data mining as a core business function.
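The preparation steps described above (removing duplicates, handling missing values, and standardizing formats) can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular tool implements it. The records, field names, date formats, and the choice to fill missing spend with the median are all assumptions made for the example.

```python
from datetime import datetime
from statistics import median

# Hypothetical raw customer records; all fields and values are invented.
raw_records = [
    {"customer_id": "101", "email": "Ana@Example.com ", "signup": "2025-01-15", "spend": "120.50"},
    {"customer_id": "101", "email": "ana@example.com", "signup": "15/01/2025", "spend": "120.50"},
    {"customer_id": "102", "email": "bo@example.com", "signup": "2025-02-03", "spend": ""},
    {"customer_id": "103", "email": "cy@example.com", "signup": "03/03/2025", "spend": "80"},
]

# Assumed input date formats: ISO (YYYY-MM-DD) and day-first (DD/MM/YYYY).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def parse_date(text):
    """Return the date as an ISO string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(records):
    # Step 1: standardize types, casing, whitespace, and date formats.
    standardized = []
    for record in records:
        spend = record["spend"].strip()
        standardized.append({
            "customer_id": int(record["customer_id"]),
            "email": record["email"].strip().lower(),
            "signup": parse_date(record["signup"].strip()),
            "spend": float(spend) if spend else None,
        })

    # Step 2: remove duplicates, keeping the first record per customer_id.
    seen = set()
    unique = []
    for record in standardized:
        if record["customer_id"] not in seen:
            seen.add(record["customer_id"])
            unique.append(record)

    # Step 3: fill missing spend with the median of the known values.
    known = [r["spend"] for r in unique if r["spend"] is not None]
    fill_value = median(known)
    for record in unique:
        if record["spend"] is None:
            record["spend"] = fill_value
    return unique


cleaned = clean(raw_records)
for row in cleaned:
    print(row)
```

Visual tools wrap the same three steps in configurable nodes or operators, which is why the order of operations (standardize first, then deduplicate, then impute) is worth documenting whichever tool a team picks.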

Key Features to Consider

When choosing data mining tools and software, businesses should focus on practical capabilities rather than marketing claims. The most useful features include:

- Visual workflow design: drag-and-drop interfaces that let teams build pipelines without heavy coding

- Integrated data preparation: tools that clean, merge, and reshape data before analysis

- Machine learning and AI support: built-in algorithms for classification, regression, clustering, and time-series forecasting

- Scalability and cloud integration: support for large files and distributed environments such as cloud warehouses

- Collaboration and governance: role-based access, model versioning, and audit trails for compliance-sensitive industries

Without these features, teams find even advanced tools difficult to maintain over time.

Popular Data Mining Tools in 2026

Several tools lead the data mining and analytics space in 2026. Many of them combine data preparation, modeling, and visualization in a single environment, so teams do not have to switch between separate applications.

Altair RapidMiner

Altair RapidMiner remains one of the most popular platforms for both business users and data scientists. Its visual workflow designer enables teams to:

- Import data from databases, spreadsheets, and cloud sources

- Clean and transform data using built-in operators

- Apply machine learning models and compare performance metrics

Altair RapidMiner supports both no-code workflows and Python/R scripting, making it ideal for organizations growing from basic to advanced analytics.

KNIME Analytics Platform

KNIME is an open-source data analytics platform that focuses on modular workflows. Users connect nodes for data input, transformation, modeling, and visualization to create reusable pipelines. Organizations such as financial institutions and healthcare providers use KNIME for:

- Merging customer data from multiple sources into a single view

- Building scoring models that flag high-risk accounts or high-value leads

In practice, KNIME helps teams document exactly how they clean and transform data. Teams gain transparency and simplify regulatory compliance. Many organizations also extend KNIME with Python and R scripts when they need custom algorithms.

IBM SPSS Modeler

IBM SPSS Modeler continues to be a strong choice for enterprises that already use IBM’s analytics stack. It follows the CRISP-DM lifecycle, guiding users from data understanding to deployment and monitoring. Typical applications include:

- Banks using SPSS Modeler to create credit-risk scores and fraud-detection models

- Insurance companies segmenting policyholders based on claims history and demographics

The platform provides a rich set of statistical and machine-learning methods, including decision trees, logistic regression, and clustering. It integrates with IBM’s broader analytics and AI ecosystem, so it suits large organizations that need tight governance and audit capabilities.

SAS Enterprise Miner

SAS Enterprise Miner targets statisticians who work with complex, regulated datasets. Its graphical interface enables users to build end-to-end workflows using the SEMMA (Sample, Explore, Modify, Model, Assess) methodology. Common use cases include:

- Energy companies using SAS Enterprise Miner to forecast demand and optimize grid operations

- Government agencies running predictive models to detect fraud in tax filings or social-benefit programs

The platform’s strength lies in its statistical accuracy, built-in validation tools, and support for a wide range of algorithms. However, it usually requires more technical expertise and a higher budget compared to no-code options.

Alteryx Designer

Alteryx Designer focuses on data preparation, blending, and lightweight modeling. Many business analysts use Alteryx Designer to:

- Combine records from Excel, CRM systems, and cloud warehouses

- Create repeatable workflows that prepare data for visualization in tools like Tableau or Power BI

By automating repetitive tasks, the software frees analysts to spend more time on interpretation and decision-making.

Apache Spark

Apache Spark is a multi-language engine for large-scale data processing and analytics. It supports SQL, Python, Scala, and Java, which makes it common in business environments that deal with terabytes or petabytes of data. Typical applications include:

- E-commerce platforms using Spark to analyze clickstream data and personalize product recommendations

- Streaming-analytics systems that process real-time logs, sensor data, or financial transactions

Apache Spark runs on clusters and is available through cloud platforms such as Databricks and Amazon EMR, making it well suited to organizations that need speed and scalability.

Open-Source and Programming Options

Many organizations rely on programming-centric tools that support data mining workflows:

- Python with libraries such as pandas, NumPy, scikit-learn, and TensorFlow: widely used for exploratory analysis, machine learning, and deep learning

- Weka: a free, open-source tool for experimenting with machine-learning algorithms and building classification or clustering models

- Orange: a visual-programming environment that blends data mining, visualization, and basic machine-learning tasks

Teams in academic, research, and startup environments often choose these options when flexibility and cost matter more than pre-built interfaces.

Real-world Business Cases

Actual use cases show how data mining tools and software create practical benefits. Here are a few examples:

E-Commerce and Recommendation Engines

Large e-commerce platforms use data mining to power recommendation systems. For instance, Amazon analyzes past purchases, browsing history, session behavior, and search queries. By applying clustering and association rules, these platforms suggest products that customers are more likely to buy. That improves conversion rates and average order values without intensive manual intervention.
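To make the association-rule idea above concrete, here is a minimal sketch that mines "customers who buy A also buy B" rules from item pairs. It is a simplified pairwise version, not a full Apriori implementation and not a description of how any named retailer builds recommendations. The baskets, the function name `pair_rules`, and the support and confidence thresholds are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase baskets; each set is one order.
baskets = [
    {"laptop", "mouse", "sleeve"},
    {"laptop", "mouse"},
    {"laptop", "sleeve"},
    {"mouse", "keyboard"},
    {"laptop", "mouse", "keyboard"},
]


def pair_rules(baskets, min_support=0.4, min_confidence=0.6):
    """Return (antecedent, consequent, support, confidence) for item pairs.

    support    = share of baskets containing both items
    confidence = share of baskets with the antecedent that also
                 contain the consequent
    """
    total = len(baskets)
    item_counts = Counter(item for basket in baskets for item in basket)
    pair_counts = Counter(
        pair for basket in baskets for pair in combinations(sorted(basket), 2)
    )

    rules = []
    for (a, b), count in pair_counts.items():
        support = count / total
        if support < min_support:
            continue
        # Each pair can produce a rule in either direction.
        for antecedent, consequent in ((a, b), (b, a)):
            confidence = count / item_counts[antecedent]
            if confidence >= min_confidence:
                rules.append((antecedent, consequent, support, confidence))

    # Strongest rules first; ties broken alphabetically for stable output.
    return sorted(rules, key=lambda r: (-r[3], r[0], r[1]))


rules = pair_rules(baskets)
for antecedent, consequent, support, confidence in rules:
    print(f"{antecedent} -> {consequent}: "
          f"support={support:.2f}, confidence={confidence:.2f}")
```

On this toy data the sketch keeps four rules, for example "keyboard -> mouse" with a confidence of 1.00. Production systems work with far larger catalogs and usually combine rules like these with other signals such as browsing history.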

Fraud Detection in Finance

Banks and payment gateways deploy data mining to detect suspicious transactions. Typical workflows analyze transaction amounts, locations, times, and device fingerprints, and teams use classification models to flag transactions that deviate from normal patterns. For example, a major bank used SAS Enterprise Miner to detect credit card fraud and reduced false positives by updating its models with new information on a weekly basis. The result was better security and a better customer experience.
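The core idea of flagging transactions that deviate from normal patterns can be shown with a simple statistical rule. Note that this sketch uses a z-score check on a single feature (amount) rather than a trained classification model, so it is a stand-in for the technique, not an example of it. The transaction history, the function name `is_suspicious`, and the threshold of 3 standard deviations are assumptions.

```python
from statistics import mean, stdev

# Hypothetical past transaction amounts for one cardholder.
history = [42.0, 38.5, 51.0, 45.2, 40.3, 47.8, 39.9, 44.1]


def is_suspicious(amount, past_amounts, threshold=3.0):
    """Flag an amount that lies more than `threshold` standard
    deviations from the cardholder's historical mean."""
    mu = mean(past_amounts)
    sigma = stdev(past_amounts)
    if sigma == 0:
        # No variation in history: any different amount is unusual.
        return amount != mu
    return abs(amount - mu) / sigma > threshold


for amount in (46.0, 950.0):
    label = "FLAG" if is_suspicious(amount, history) else "ok"
    print(f"{amount:>8.2f}  {label}")
```

A real fraud model would add the other signals mentioned above (location, time, device fingerprint) and learn its decision boundary from labeled cases, which is what lets regular retraining reduce false positives.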

Retail and Inventory Optimization

Retail chains use data mining to forecast demand, optimize stock levels, and plan promotions. For example, a grocery chain might apply time-series models to predict sales for each store and product category, while a fashion retailer might use clustering to group products by season and sales velocity and then adjust markdowns accordingly. These models help prevent overstock and out-of-stock situations, which directly affect revenue and customer satisfaction.
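As a rough illustration of time-series demand forecasting, the sketch below applies simple exponential smoothing, one of the most basic time-series methods. It is only one possible choice and it ignores seasonality and promotions. The sales figures, the function name, and the smoothing factor `alpha=0.5` are hypothetical.

```python
def exponential_smoothing_forecast(sales, alpha=0.5):
    """Forecast the next period with simple exponential smoothing.

    Each new observation pulls the running level toward itself by
    a factor of `alpha`; the final level is the next-period forecast.
    """
    if not sales:
        raise ValueError("sales history must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")

    level = sales[0]
    for observed in sales[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


# Hypothetical weekly unit sales for one product in one store.
weekly_units = [100, 120, 110, 130]
forecast = exponential_smoothing_forecast(weekly_units)
print(f"Forecast for next week: {forecast:.1f} units")
```

A higher `alpha` reacts faster to recent changes, while a lower value smooths out noise. Commercial tools typically choose such parameters automatically and add trend and seasonal components.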

Healthcare and Patient Outcomes

In healthcare, data mining helps organizations improve patient outcomes and reduce costs. Hospitals use predictive models to identify patients at high risk of readmission, while public-health agencies analyze anonymized data to detect disease outbreaks. By integrating clinical records, claims data, and social risk factors, providers can design more targeted interventions.

Choosing the Right Tools

Selecting the right data mining tools and software depends on three main factors: team skills, data volume, and business needs. Small teams and non-technical users benefit from visual tools like RapidMiner, KNIME, or Alteryx, which minimize the need for coding. Data-science-led organizations may prefer platforms like SAS Enterprise Miner or Python-based stacks that offer more control over algorithms and experiments.

Organizations should also consider:

- How well the tool integrates with existing data sources, such as cloud warehouses and CRM systems

- Whether the vendor supports compliance standards such as GDPR or HIPAA

- The total cost of ownership, including licensing, infrastructure, and training

Running a small pilot on one high-impact use case, such as customer churn prediction or demand forecasting, can help teams evaluate whether a tool fits their workflow before committing at scale.

When to Outsource Data Mining

Not every company has the internal resources to build and maintain data mining systems. This makes outsourcing services a practical option for accessing specialized skills and proven workflows. A mid-sized retailer might outsource the development of a sales-forecasting model while keeping day-to-day reporting in-house so that internal teams still control business decisions. In a similar way, a healthcare provider might partner with a vendor that offers data mining services. The vendor builds and maintains risk-prediction models, then refines the results with input from internal clinicians.

This approach helps businesses get started quickly without hiring a full data-science team upfront. They still need to maintain strong data governance, protect privacy, and ensure clear communication with external partners.

The Path Forward

Data governance remains central to protecting privacy and ensuring reliable results. Clear communication with vendors builds on this by preventing gaps in workflows or model accuracy. Businesses can then balance in-house control with external expertise and avoid common pitfalls such as overbuilt systems or mismatched priorities. This approach turns data into a strategic advantage: companies scale steadily, cut unnecessary costs, and make decisions with confidence. The path forward starts with one targeted project that proves value and builds momentum.