Running a business with dirty data is like navigating a ship with a broken compass: it inevitably leads to lost direction, wasted resources, and operational chaos.

IBM estimates that poor data quality costs the U.S. economy about $3.1 trillion each year. As data volumes explode, delays in fixing inaccuracies and inconsistencies lead to compounding errors, resulting in flawed analytics, poor decision-making, and even severe compliance violations. Many businesses rely on outsourced solutions for data quality management, and this is where artificial intelligence (AI) is playing a critical role. By integrating AI into data cleansing services, advanced outsourcing providers automate error detection, significantly reduce processing time, and improve the accuracy and consistency of datasets, leading to better-informed decisions and stronger business outcomes.

Inaccurate data slows decision-making and lowers employee productivity. According to Trifacta, automating data cleansing with AI or machine learning reduces the time spent on manual data corrections. This post examines the perils of dirty data and how AI speeds up data cleansing and improves accuracy.

The Cost of Dirty Data

Dirty data refers to information that is flawed in various ways, such as being outdated, incomplete, inaccurate, inconsistent, or insecure. It includes errors like duplicate records, misspelled addresses, missing field values, outdated phone numbers, and incorrect client information. These quality issues can severely impact decision-making and operational efficiency.
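Before cleansing anything, it helps to measure how dirty a dataset actually is. The sketch below is a minimal, standard-library Python example that counts exact duplicate rows and empty fields; the `profile` function, field names, and sample records are hypothetical illustrations, not part of any particular data quality tool.

```python
from collections import Counter

# Hypothetical sample records showing two of the issues described above
records = [
    {"name": "Jane Doe", "phone": "555-0101", "city": "San Francisco"},
    {"name": "Jane Doe", "phone": "555-0101", "city": "San Francisco"},  # exact duplicate
    {"name": "John Roe", "phone": "", "city": "Boston"},  # missing phone
    {"name": "Ann Lee", "phone": "555-0199", "city": None},  # missing city
]

def profile(rows):
    """Count exact duplicate rows and empty values per field."""
    # Turn each row into a hashable key so identical rows can be counted
    row_counts = Counter(tuple(sorted(r.items())) for r in rows)
    duplicates = sum(n - 1 for n in row_counts.values() if n > 1)
    # Treat None and empty strings as missing values
    missing = Counter(
        field for r in rows for field, value in r.items() if value in (None, "")
    )
    return {"duplicates": duplicates, "missing": dict(missing)}

print(profile(records))
```

Exact matching only catches identical rows; near-duplicates such as misspelled names require fuzzy record matching.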

Up to 92% of companies report that dirty data affects customer satisfaction, which shows that data quality matters beyond internal business operations. In financial institutions, incomplete KYC (Know Your Customer) records and outdated addresses result in regulatory penalties, account freezes, and customer complaints. Several global banks have been fined millions for poor data governance tied to inaccurate customer records.

In healthcare, 25% of data is inaccurate or incomplete, according to HealthIT.gov, which can lead to potentially life-threatening errors. Hospitals often have duplicate or mismatched patient records due to spelling errors, missing dates of birth (DOBs), or inconsistent formats. A study in Medical Economics found that the average healthcare organization has a 10% EHR duplication rate, with some institutions reporting rates as high as 18%. Duplicate and mismatched records lead to medication or treatment errors, delayed care, and insurance claim denials.

Regardless of your industry, using artificial intelligence for data cleaning speeds up quality improvement while reducing errors, minimizing rework, and boosting operational efficiency.

High-Speed AI-Powered Data Cleansing: Solving Traditional Challenges

Modern organizations handle massive volumes of structured and unstructured data, which makes manual cleansing impractical. Manual cleansing is time-consuming, error-prone, and difficult to scale, often causing significant operational delays and costs. It struggles with large volumes, complex inconsistencies, and inconsistent human judgment, producing unreliable, low-quality data. Legacy software also falls short with large datasets or complex errors, compromising data integrity.

Enter AI, the game-changer in data cleansing. Automating data validation with AI improves accuracy, reduces manual effort, and helps maintain consistent data quality across systems.

How AI in Data Cleansing Works

AI, machine learning, and natural language processing automate and enhance data quality processes by identifying patterns, detecting anomalies, and standardizing information at scale.

Artificial Intelligence (AI) for Error Detection

AI-powered systems use rule-based logic combined with intelligent automation to streamline repetitive data quality tasks. These systems can automatically validate data fields, flag inconsistencies, detect duplicates, and enforce formatting standards. AI also enables real-time monitoring, ensuring data remains clean as new records are ingested. Key applications include:

- Automated data validation and consistency checks

- Duplicate detection and record matching

- Format standardization (dates, addresses, currencies)

- Real-time error flagging

Machine Learning (ML) for Pattern Recognition and Anomaly Detection

Machine learning models learn from historical data to recognize normal patterns and identify irregular or suspicious entries. Unlike traditional rule-based systems, ML adapts over time, improving accuracy as more data is processed. Common ML use cases include:

- Identifying outliers and unusual data behavior

- Predicting missing values

- Improving record linkage across multiple sources

- Enhancing duplicate detection accuracy

Natural Language Processing (NLP) for Unstructured Data Cleaning

NLP enables systems to understand and process text-based data such as customer feedback, clinical notes, emails, and documents. It helps extract relevant information, standardize terminology, and remove noise from unstructured datasets. NLP applications include:

- Text normalization and keyword extraction

- Entity recognition (names, locations, medical terms)

- Removing irrelevant or redundant text

- Standardizing free-text entries
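As a rough illustration of standardizing free-text entries, the sketch below normalizes Unicode, lowercases the text, strips surrounding punctuation, and expands abbreviations from a small lookup table. The `ABBREVIATIONS` dictionary and `normalize_text` function are hypothetical; production NLP pipelines would typically layer tokenizers, entity recognition models, and curated domain vocabularies on top of simple rules like these.

```python
import unicodedata

# Hypothetical abbreviation table; a real system would rely on a curated,
# domain-specific vocabulary (e.g., clinical terminology)
ABBREVIATIONS = {"pt": "patient", "hx": "history", "htn": "hypertension", "w/": "with"}

def normalize_text(text: str) -> str:
    """Normalize a free-text entry: Unicode form, case, punctuation, abbreviations."""
    text = unicodedata.normalize("NFKC", text).lower()
    tokens = []
    for raw in text.split():  # split() also collapses repeated whitespace
        tok = raw.strip(".,;:")  # drop punctuation around the token
        tokens.append(ABBREVIATIONS.get(tok, tok))
    # Skip tokens that were pure punctuation
    return " ".join(t for t in tokens if t)

print(normalize_text("Pt.  has hx of HTN,  w/ no complications."))
```

Applied to the sample note, the function yields "patient has history of hypertension with no complications", a form that is easier to search and compare across records.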

When AI, ML, and NLP work together, the result is faster processing, higher accuracy, and better scalability. According to TechRepublic, companies using AI tools for data cleaning report cleaning datasets up to five times faster than with traditional methods.

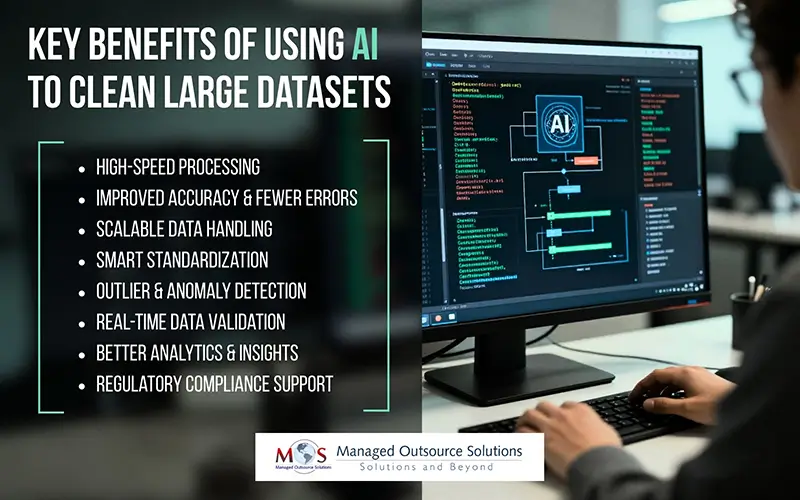
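The ML anomaly detection idea described earlier can be approximated, for a single numeric field, with a robust statistical baseline. The sketch below uses the modified z-score (based on the median and the median absolute deviation) rather than a trained ML model; it is a stand-in chosen for illustration, and learned approaches such as isolation forests can capture patterns across many fields that this one-column rule cannot. The function name and sample data are hypothetical.

```python
from statistics import median

def robust_outliers(values, threshold=3.5):
    """Flag values whose modified z-score exceeds `threshold`.

    The median and median absolute deviation (MAD) are used because, unlike
    the mean and standard deviation, they are not dragged around by the
    outliers themselves. 0.6745 rescales MAD to be comparable to a standard
    deviation for normally distributed data.
    """
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical daily order totals with one likely data-entry error
totals = [120.0, 115.5, 130.2, 118.9, 125.0, 12500.0, 122.3]
print(robust_outliers(totals))
```

Only the 12500.0 entry is flagged here; a cleansing workflow would typically route such values for review rather than delete them automatically.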

Key Benefits of Using AI to Clean Large Datasets

AI efficiently handles removing duplicates, identifying anomalies, standardizing formats, and filling in missing values, making it essential for processing large datasets.
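Filling in missing values does not always require a complex model. The sketch below assumes that records in the same category tend to have similar values, fills a missing field with the median of its group, and flags each filled value so it can be audited later. `impute_by_group` and the sample data are hypothetical; ML-based imputation would instead predict the value from many features at once.

```python
from statistics import median

def impute_by_group(rows, group_key, target):
    """Fill missing `target` values with the median of rows sharing `group_key`.

    Returns new dicts; the input rows are left unchanged.
    """
    # Collect the known values for each group
    known = {}
    for r in rows:
        if r.get(target) is not None:
            known.setdefault(r[group_key], []).append(r[target])
    filled = []
    for r in rows:
        r = dict(r)  # copy so the input is not mutated
        if r.get(target) is None and r[group_key] in known:
            r[target] = median(known[r[group_key]])
            r[f"{target}_imputed"] = True  # audit trail for filled values
        filled.append(r)
    return filled

# Hypothetical product records with one missing weight
products = [
    {"sku": "A1", "category": "laptop", "weight_kg": 1.5},
    {"sku": "A2", "category": "laptop", "weight_kg": 2.0},
    {"sku": "A3", "category": "laptop", "weight_kg": None},
    {"sku": "B1", "category": "monitor", "weight_kg": 4.2},
]
for row in impute_by_group(products, "category", "weight_kg"):
    print(row)
```

Keeping the `_imputed` flag lets downstream analysts distinguish observed values from estimated ones.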

- Speed: Automates routine, repetitive data cleaning with advanced algorithms, processing millions of records in minutes rather than days, which lowers operational costs and reduces manual labor.

- Improved Accuracy and Error Reduction: Uses machine learning to automatically identify errors such as duplicates, inconsistencies, and incorrect entries, catching anomalies that might be missed manually.

- Scalable Processing: Effortlessly handles large, complex, and growing datasets from multiple sources, making it ideal for big data applications.

- Intelligent Standardization and Normalization: Detects varied formats (e.g., “SF” vs. “San Francisco”) and automatically standardizes them, improving data consistency across systems.

- Outlier Detection: Flags anomalies that don’t match expected patterns, improving data quality.

- Real-Time Data Validation: Validates data instantly as it is entered or updated, helping ensure that only high-quality data enters the database.

- Better Data Insights and Performance: Improves the performance of predictive models, leading to more accurate analytics and better business decision-making.

- Improved Regulatory Compliance: Helps maintain data integrity, supporting compliance with regulations such as GDPR, CCPA, and HIPAA.
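To make the standardization and real-time validation benefits concrete, here is a minimal rule-based sketch that could run on each record as it is ingested. It maps city aliases such as "SF" to a canonical name, converts several date formats to ISO 8601, and returns a list of validation errors. The alias table, accepted date formats, field names, and the deliberately simple email pattern are all assumptions for illustration; an AI-driven tool might learn such mappings from historical corrections instead of hard-coding them.

```python
import re
from datetime import datetime

# Hypothetical alias table and accepted input date formats
CITY_ALIASES = {"sf": "San Francisco", "san fran": "San Francisco", "nyc": "New York"}
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %b %Y")
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # simple shape check only

def standardize_and_validate(record):
    """Return (clean_record, errors) for one incoming record."""
    clean, errors = dict(record), []
    # Map known city aliases to their canonical spelling
    city = (record.get("city") or "").strip()
    clean["city"] = CITY_ALIASES.get(city.lower(), city)
    # Try each accepted date format and emit ISO 8601 (YYYY-MM-DD)
    raw_date = (record.get("signup_date") or "").strip()
    for fmt in DATE_FORMATS:
        try:
            clean["signup_date"] = datetime.strptime(raw_date, fmt).date().isoformat()
            break
        except ValueError:
            continue
    else:  # no format matched
        errors.append(f"unrecognized date: {raw_date!r}")
    if not EMAIL_RE.match(record.get("email") or ""):
        errors.append("invalid email")
    return clean, errors

print(standardize_and_validate({"city": "SF", "signup_date": "03/15/2024", "email": "jane@example.com"}))
print(standardize_and_validate({"city": "Boston", "signup_date": "15th March", "email": "jane@"}))
```

Records with a non-empty error list could be held back or routed for review instead of being written to the database.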

As an example, let’s look at how AI improves data quality and accuracy in healthcare.

Healthcare organizations manage large volumes of patient records from EHR systems, labs, billing platforms, and insurance providers. These datasets often contain duplicates, inconsistent formats, and incomplete information, which can cause claim denials, delayed care, and compliance risks.

By applying AI technologies, healthcare organizations can automatically match duplicate patient records, standardize demographic and clinical data, extract key information from unstructured physician notes, and validate insurance details in real time. Automated tools can also detect incorrect procedure codes or missing fields, and normalize medical terminology across documentation.
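The duplicate patient matching described above can be sketched with fuzzy string comparison. The example below uses Python's standard-library `difflib.SequenceMatcher` to score name similarity after normalizing case, punctuation, and name order, skips pairs whose dates of birth conflict, and still compares records with a missing DOB. The 0.85 threshold, field names, and sample records are hypothetical; real patient-matching systems generally weigh many more attributes and send uncertain matches to human review.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize_name(name):
    # Lowercase, replace punctuation with spaces, and sort name parts
    # so that "Smith, John" and "John Smith" compare as equal
    cleaned = "".join(c if c.isalnum() or c.isspace() else " " for c in name.lower())
    return " ".join(sorted(cleaned.split()))

def likely_duplicates(patients, threshold=0.85):
    """Yield (id_a, id_b, score) for similar names whose DOBs do not conflict."""
    for a, b in combinations(patients, 2):
        if a["dob"] and b["dob"] and a["dob"] != b["dob"]:
            continue  # conflicting dates of birth: treat as different people
        score = SequenceMatcher(None, normalize_name(a["name"]), normalize_name(b["name"])).ratio()
        if score >= threshold:
            yield a["id"], b["id"], round(score, 2)

# Hypothetical patient records with a misspelling, reordered name, and missing DOB
patients = [
    {"id": 1, "name": "Jonathan Smith", "dob": "1980-04-12"},
    {"id": 2, "name": "Smith, Jonathon", "dob": "1980-04-12"},
    {"id": 3, "name": "Jonathan Smyth", "dob": None},
    {"id": 4, "name": "Maria Garcia", "dob": "1975-09-30"},
]
print(list(likely_duplicates(patients)))
```

Comparing every pair grows quadratically with the number of records, so at scale candidate pairs are usually narrowed first (for example, by grouping on DOB or postal code) before fuzzy scoring.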

AI in Data Cleansing: Challenges, Considerations, and Future Trends

AI data cleansing tools offer speed and scalability, but they also present significant challenges:

- Data Privacy: It’s important to ensure that AI tools comply with data privacy regulations (GDPR, HIPAA).

- Potential for Misinterpretation: Automated tools can misinterpret complex, unstructured, or context-heavy data.

- Integration Issues: It can be challenging to integrate AI solutions with existing data pipelines and systems.

- Training: High-quality training data is essential to avoid “garbage in, garbage out” problems.

- Cost: AI-driven data transformation involves high initial setup costs.

Despite these challenges, AI is here to stay, with continued advancements expected to further improve data quality, accuracy, and processing speed. As automation capabilities mature, data cleansing workflows may become largely hands-free, saving time and reducing operational costs. At the same time, deeper integration between AI, cloud computing, and big data analytics will create smarter, more scalable data management ecosystems that support real-time insights and faster decision-making.

Given the need to process huge volumes of data with quick turnaround, AI in data cleansing has become essential for ensuring accuracy and efficiency. Many businesses now partner with data cleansing companies to access advanced technologies and best practices for AI-driven data cleaning, avoiding the cost and complexity of building in-house systems. By leveraging expert teams and AI-powered tools, businesses can improve data accuracy, reduce processing time, and scale operations quickly. Outsourcing can also provide continuous monitoring and compliance support, allowing internal teams to focus on core business activities while maintaining high data quality standards.

Achieve faster turnaround times and guaranteed quality with our AI-driven data cleansing solutions.